Research Projects

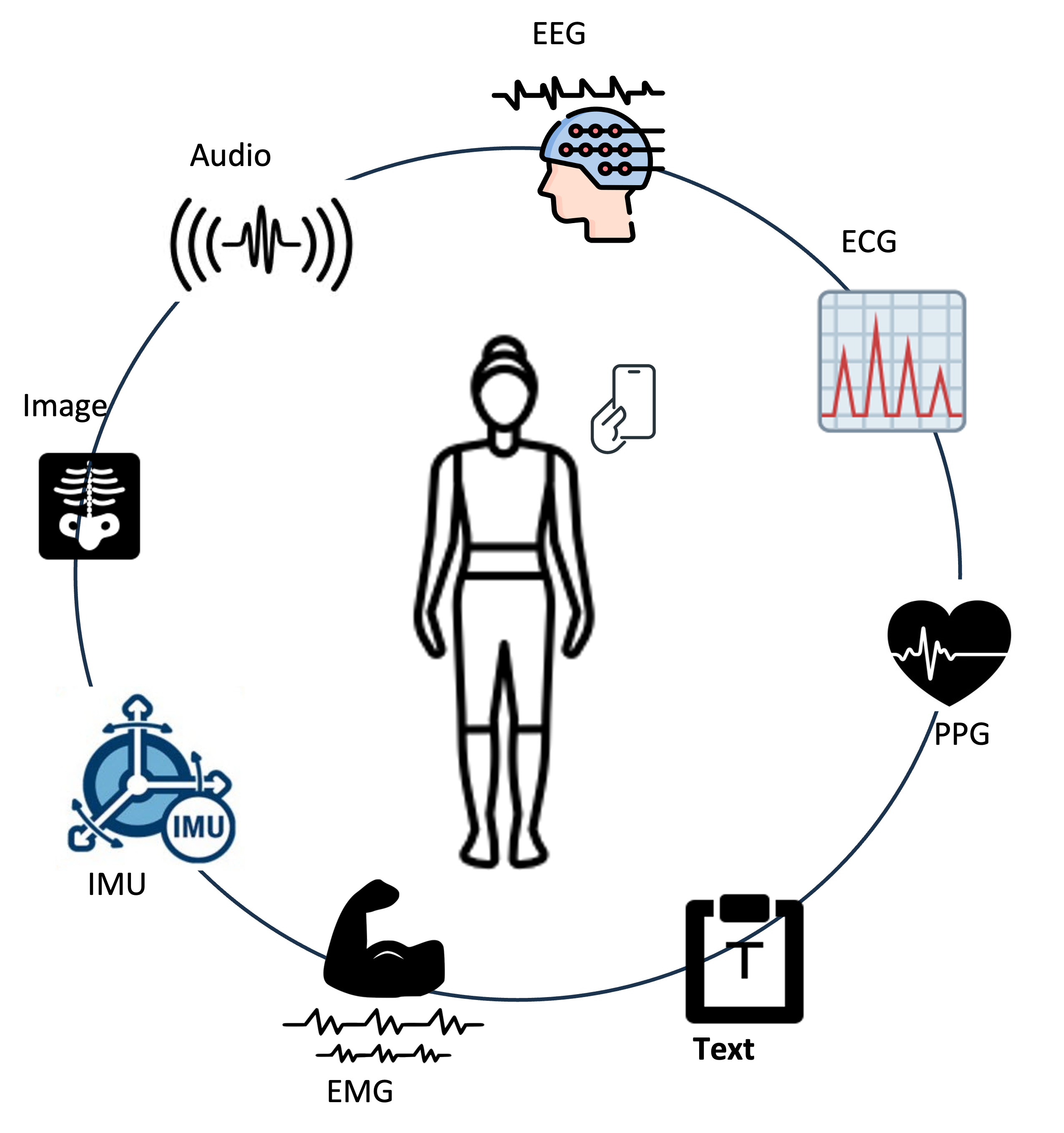

Mobile Health Diagnosis

Screening, monitoring, and progression forecasting on phones and wearables

This line of work targets diagnosis and longitudinal health monitoring in ubiquitous settings: screening, risk stratification, and forecasting of disease course from data collected outside the clinic. We combine mobile and wearable sensing with machine learning so that timely signals can support early intervention. Audio (for example cough, breath, and voice) is one important modality for respiratory, affect-related, and mental health contexts, used together with other physiological or behavioural cues where appropriate. The emphasis is on end-to-end clinical and public-health questions in mobile health, rather than generic modelling of structured time series or tabular records; those methodological themes are developed separately under Structured Data Modelling for Health.

Project members: Hongyu Jin, Ye Bai

Relevant Publications:

- Dang, T., Han, J., Xia, T., Bondareva, E., Brown, C., Chauhan, J., Grammenos, A., Spathis, D., Cicuta, P., and Mascolo, C.
  Conditional Neural ODE Processes for Individual Disease Progression Forecasting: A Case Study on COVID-19, KDD 2023
- Dang, T., Han, J., Xia, T., Spathis, D., Bondareva, E., Brown, C., Chauhan, J., Grammenos, A., Hasthanasombat, A., Floto, A., Cicuta, P., and Mascolo, C.
  Exploring longitudinal cough, breath, and voice data for COVID-19 progression prediction via sequential deep learning: model development and validation, JMIR, 2023
- Dang, T., Ghosh, A., Spathis, D., and Mascolo, C.
  Human-centered AI for mobile health sensing: challenges and opportunities, Royal Society Open Science, 2023
- Han, J., Xia, T., Spathis, D., Bondareva, E., Brown, C., Chauhan, J., Dang, T., Grammenos, A., Hasthanasombat, A., Floto, A., Cicuta, P., and Mascolo, C.
  Sounds of COVID-19: exploring realistic performance of audio-based digital testing, NPJ Digital Medicine, 2022

Speech and Voice Intelligence

AI for speech perception, generation, and adaptation in conversational systems

This project focuses on building speech and voice intelligence across the full pipeline of perception and generation: automatic speech recognition, text-to-speech, speaker and style adaptation, and conversational interaction. We are particularly interested in modelling ambiguity and uncertainty in speech signals, and in developing robust, efficient, and trustworthy speech foundation models that can generalise across domains, speakers, and affective states. By combining speech processing with large language models and multimodal signals, we aim to enable next-generation conversational AI systems that listen, speak, and adapt in a human-centred way for health and everyday applications.

Project members: Yang Xiao, Siyi Wang, Jiaheng Dong

Relevant Publications:

- Dong, J., Jia, H., Chatterjee, S., Ghosh, A., Bailey, J., Dang, T.
  E-BATS: Efficient Backpropagation-Free Test-Time Adaptation for Speech Foundation Models, NeurIPS 2025
- Dang, T., Gao, Y., Jia, H.
  Test-time Scaling for Auditory Cognition in Audio Language Models, ICASSP 2026
- Halim, J., Wang, S., Jia, H., Dang, T.
  Token-Level Logits Matter: A Closer Look at Speech Foundation Models for Ambiguous Emotion Recognition, INTERSPEECH 2025
- Hong, X., Gong, Y., Sethu, V., Dang, T.
  AER-LLM: Ambiguity-aware Emotion Recognition Leveraging Large Language Models, ICASSP 2025
- Zhang, W., Jin, H., Wang, S., Wei, Z., Dang, T.
  Scaling Ambiguity: Augmenting Human Annotation in Speech Emotion Recognition with Audio-Language Models, ICASSP 2026

Structured Data Modelling for Health

Learning from structured health data with a focus on time series

Many health data sources, such as ECGs, EEGs, acoustic signals, electronic health records, and derived clinical features, are naturally structured and often longitudinal. This project investigates representation learning for such structured health data, with a particular emphasis on health time series. We study self-supervised and test-time adaptation methods that can handle distribution shifts (e.g., missingness, motion artefacts, population differences) and aim to build models that are robust, data-efficient, and clinically meaningful. The goal is to improve downstream tasks such as risk prediction, disease trajectory modelling, and personalised decision support.

Project members: Jie Huang

Relevant Publications:

- Wu, Y., Dang, T., Spathis, D., Jia, H., and Mascolo, C.
  StatioCL: Contrastive Learning for Time Series via Non-Stationary and Temporal Contrast, CIKM 2024
- Dang, T., Dimitriadis, A., Wu, J., Sethu, V., and Ambikairajah, E.
  Constrained dynamical neural ODE for time series modelling: A case study on continuous emotion prediction, ICASSP, 2023
- Xia, T., Dang, T., Han, J., Qendro, L., and Mascolo, C.
  Uncertainty-aware Health Diagnostics via Class-balanced Evidential Deep Learning, IEEE Journal of Biomedical and Health Informatics, 2024
- Jia, H., Kwon, Y., Orsino, A., Dang, T., Talia, D., and Mascolo, C.
  TinyTTA: Efficient Test-time Adaptation via Early-exit Ensembles on Edge Devices, NeurIPS 2024

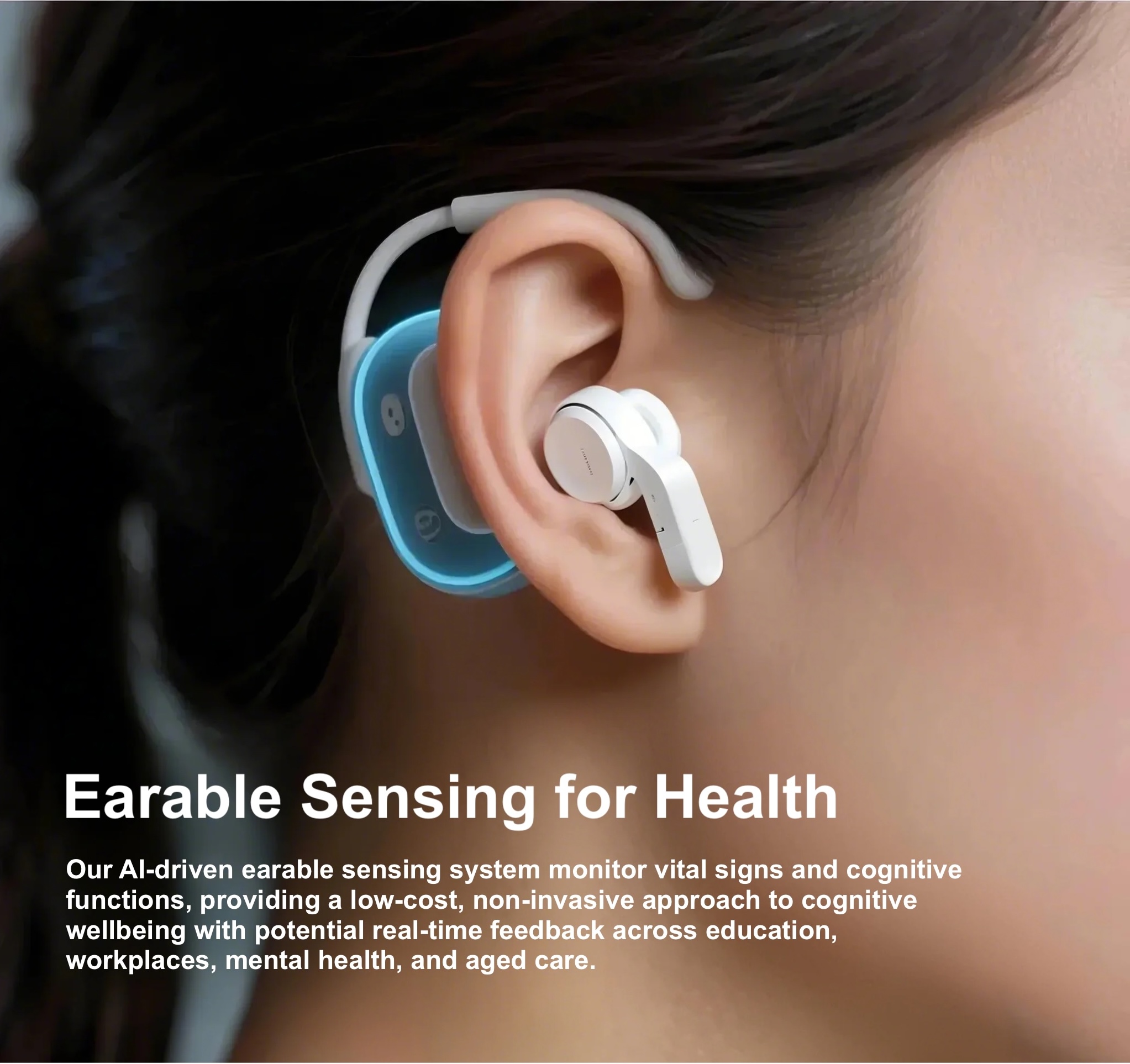

Earable Sensing

Multimodal sensing for continuous monitoring of vital signs

This project explores the potential of earable (ear-worn) devices for continuous monitoring of vital signs. Using multimodal sensors integrated into these devices, we seek to develop non-invasive methods for tracking physiological parameters. The research focuses on overcoming challenges related to signal quality, power efficiency, and user comfort, while ensuring accurate and reliable health monitoring in everyday settings.

Project members: You Zuo

Relevant Publications:

- Quan, J., Al-Naimi, K., Wei, X., Liu, Y., Montanari, A., Dang, T.
  Cognitive Load Monitoring via Earable Acoustic Sensing, ICASSP 2025
- Wei, X., Dang, T.*, Al-Naimi, K., Liu, Y., Kawsar, F., Montanari, A.
  Listening to the Mind: Earable Acoustic Sensing of Cognitive Load, Companion of 2025 ACM UbiComp/ISWC
- Romero, J., Ferlini, A., Spathis, D., Dang, T., Farrahi, K., Kawsar, F., and Montanari, A.
  OptiBreathe: An Earable-based PPG System for Continuous Respiration Rate, Breathing Phase, and Tidal Volume Monitoring, HotMobile 2024
- Ma, D., Dang, T., Ding, M., and Balan, R.
  ClearSpeech: Improving Voice Quality of Earbuds Using Both In-Ear and Out-Ear Microphones, UbiComp, 2024
- Butkow, K., Dang, T., Ferlini, A., Ma, D., and Mascolo, C.
  hEARt: Motion-resilient Heart Rate Monitoring with In-ear Microphones, PerCom 2023